# Riteway


**The standard testing framework for AI Driven Development (AIDD) and software agents.**

Riteway is a testing assertion style and philosophy which leads to simple, readable, helpful unit tests for humans and AI agents.

It lets you write better, more readable tests with a fraction of the code that traditional assertion frameworks would use, and the `riteway ai` CLI lets you write AI agent prompt evals as easily as you would write a unit testing suite.

Riteway is the AI-native way to build a modern test suite. It pairs well with Vitest, Playwright, Claude Code, Cursor Agent, Google Antigravity, and more.

* **R**eadable

* **I**solated/**I**ntegrated

* **T**horough

* **E**xplicit

Riteway forces you to write **R**eadable, **I**solated, and **E**xplicit tests, because that's the only way you can use the API. It also makes it easier to be thorough by making test assertions so simple that you'll want to write more of them.

## Why Riteway for AI Driven Development?

**📖 Learn more:** [Better AI Driven Development with Test Driven Development](https://medium.com/effortless-programming/better-ai-driven-development-with-test-driven-development-d4849f67e339)

Riteway's structured approach makes it ideal for AIDD:

- **Clear requirements**: The `given`/`should` expectations and the 5-question framework help AI agents understand exactly what to build

- **Readable by design**: Natural language descriptions make tests comprehensible to both humans and AI

- **Simple API**: Minimal surface area reduces AI confusion and hallucinations

- **Token efficient**: Concise syntax saves valuable context window space

## The 5 Questions Every Test Must Answer

There are [5 questions every unit test must answer](https://medium.com/javascript-scene/what-every-unit-test-needs-f6cd34d9836d). Riteway forces you to answer them.

1. What is the unit under test (module, function, class, whatever)?

2. What should it do? (Prose description)

3. What was the actual output?

4. What was the expected output?

5. How do you reproduce the failure?
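
As a rough illustration (not Riteway's actual implementation), the sketch below shows how the fields of a single assertion line up with those five questions. `describeAssertion` is a hypothetical helper introduced only for this example, and it uses a simplified `===` comparison where Riteway uses deep equality.

```javascript
// Hypothetical helper, not part of Riteway. It only illustrates how
// given/should/actual/expected answer the five questions.
const describeAssertion = (unit, { given, should, actual, expected }) => ({
  unit,                                            // 1. the unit under test
  description: `Given ${given}: should ${should}`, // 2. what it should do
  actual,                                          // 3. the actual output
  expected,                                        // 4. the expected output
  // 5. the given/should prose plus both values show how to reproduce a failure
  passed: actual === expected                      // simplified comparison
});

// A small function to test.
const sum = (a = 0, b = 0) => a + b;

const report = describeAssertion('sum()', {
  given: 'no arguments',
  should: 'return 0',
  actual: sum(),
  expected: 0
});

console.log(report.description); // Given no arguments: should return 0
```

The description string has the same shape as the lines in Riteway's TAP output, which is why a failing test report can answer all five questions at a glance.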

## Installing

```shell

npm install --save-dev riteway

```

Then add an npm command in your package.json:

```json

"test": "riteway test/**/*-test.js",

```

For projects using both core Riteway tests and JSX component tests, you can use a dual test runner setup:

```json

"test": "node source/test.js && vitest run",

```

Now you can run your tests with `npm test`. Riteway also supports full TAPE-compatible usage syntax, so you can have an advanced entry that looks like:

```json

"test": "nyc riteway test/**/*-rt.js | tap-nirvana",

```

In this case, we're using [nyc](https://www.npmjs.com/package/nyc), which generates test coverage reports. The output is piped through an advanced TAP formatter, [tap-nirvana](https://www.npmjs.com/package/tap-nirvana), which adds color coding, source line identification, and advanced diff capabilities.

### Requirements

Riteway requires Node.js 16+ and uses native ES modules. Add `"type": "module"` to your package.json to enable ESM support. For JSX component testing, you'll need a build tool that can transpile JSX (see [JSX Setup](#jsx-setup) below).

## `riteway ai` — AI Prompt Evaluations

The `riteway ai` CLI runs your AI agent prompt evaluations against a configurable pass-rate threshold. Write a `.sudo` test file, run it through any supported AI agent, and get a TAP-formatted report with per-assertion pass rates across multiple runs.

### Authentication

All agents use OAuth authentication — no API keys needed. Authenticate once before running evals:

| Agent | Command | Docs |
|-------|---------|------|
| Claude | `claude setup-token` | [Claude Code docs](https://docs.anthropic.com/en/docs/claude-code) |
| Cursor | `agent login` | [Cursor docs](https://docs.cursor.com/context/rules-for-ai) |
| OpenCode | See docs | [opencode.ai/docs/cli](https://opencode.ai/docs/cli/) |

### Writing a test file

AI evals are written in `.sudo` files using [SudoLang](https://github.com/paralleldrive/sudolang) syntax:

```
# my-feature-test.sudo

import 'path/to/spec.mdc'

userPrompt = """
Implement the sum function as described.
"""

- Given the spec, should name the function sum
- Given the spec, should accept two parameters named a and b
- Given the spec, should return the correct sum of the two parameters
```

Each `- Given ..., should ...` line becomes an independently judged assertion. The agent is asked to respond to the `userPrompt` (with any imported spec as context), and a judge agent scores each assertion across all runs.

### Running an eval

```shell

riteway ai path/to/my-feature-test.sudo

```

By default this runs **4 passes**, requires a **75% pass rate**, uses the **claude** agent, runs up to **4 tests concurrently**, and allows **300 seconds** per agent call.

```shell

# Specify runs, threshold, and agent

riteway ai path/to/test.sudo --runs 10 --threshold 80 --agent opencode

# Use a Cursor agent with color output

riteway ai path/to/test.sudo --agent cursor --color

# Use a custom agent config file (mutually exclusive with --agent)

riteway ai path/to/test.sudo --agent-config ./my-agent.json

```
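
To make the relationship between `--runs` and `--threshold` concrete, here is a small sketch of the arithmetic. It assumes an assertion passes when its pass percentage is greater than or equal to the threshold; that comparison and the name `meetsThreshold` are assumptions for illustration, not part of the CLI.

```javascript
// Hypothetical helper. Assumes "pass" means passRate >= threshold.
const meetsThreshold = ({ passes, runs, threshold }) => {
  const passRate = (passes / runs) * 100;
  return { passRate, passed: passRate >= threshold };
};

// With the defaults (4 runs, 75% threshold), 3 of 4 passing runs is enough...
const threeOfFour = meetsThreshold({ passes: 3, runs: 4, threshold: 75 });

// ...but 2 of 4 is not.
const twoOfFour = meetsThreshold({ passes: 2, runs: 4, threshold: 75 });

console.log(threeOfFour); // { passRate: 75, passed: true }
console.log(twoOfFour);   // { passRate: 50, passed: false }
```

Raising `--runs` gives finer-grained pass rates, which matters when an agent's output varies between runs.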

### Options

| Flag | Default | Description |
|------|---------|-------------|
| `--runs N` | `4` | Number of passes per assertion |
| `--threshold P` | `75` | Required pass percentage (0–100) |
| `--timeout MS` | `300000` | Per-agent-call timeout in milliseconds |
| `--agent NAME` | `claude` | Agent: `claude`, `opencode`, `cursor`, or a custom name from `riteway.agent-config.json` |
| `--agent-config FILE` | none | Path to a flat single-agent JSON config `{"command","args","outputFormat"}`; mutually exclusive with `--agent` |
| `--concurrency N` | `4` | Max concurrent test executions |
| `--color` | off | Enable ANSI color output |
| `--save-responses` | off | Save raw agent responses and judge details to a companion `.responses.md` file |

Results are written as a TAP markdown file under `ai-evals/` in the project root.

### Saving raw responses for debugging

When `--save-responses` is passed, a companion `.responses.md` file is written alongside the `.tap.md` output. It contains the raw result agent response and per-run judge details (passed, actual, expected, score) for every assertion — useful for debugging failures without adding console noise.

```shell

riteway ai path/to/test.sudo --save-responses

```

Each test file produces its own uniquely-named pair of files (e.g. `2026-03-17-test-abc12.tap.md` and `2026-03-17-test-abc12.responses.md`), so multiple test files never conflict.

#### Capturing responses as CI artifacts

In GitHub Actions, use `--save-responses` and upload the `ai-evals/` directory as an artifact:

```yaml
- name: Run AI prompt evaluations
  run: npx riteway ai path/to/test.sudo --save-responses

- name: Upload AI eval responses
  if: always()
  uses: actions/upload-artifact@v4
  with:
    name: ai-eval-responses
    path: ai-evals/*.responses.md
    retention-days: 14
```

The `if: always()` ensures responses are uploaded even when assertions fail, so you can inspect exactly what the agent produced.

### Custom agent configuration

`riteway ai init` writes all built-in agent configs to `riteway.agent-config.json` in your project root, so you can add custom agents or tweak existing flags:

```shell

riteway ai init # create riteway.agent-config.json

riteway ai init --force # overwrite existing file

```

The generated file is a keyed registry. Add a custom agent entry and use it with `--agent`:

```json
{
  "claude": { "command": "claude", "args": ["-p", "--output-format", "json", "--no-session-persistence"], "outputFormat": "json" },
  "opencode": { "command": "opencode", "args": ["run", "--format", "json"], "outputFormat": "ndjson" },
  "cursor": { "command": "agent", "args": ["--print", "--output-format", "json"], "outputFormat": "json" },
  "my-agent": { "command": "my-tool", "args": ["--json"], "outputFormat": "json" }
}
```

```shell

riteway ai path/to/test.sudo --agent my-agent

```

Once `riteway.agent-config.json` exists, any agent key defined in it supersedes the library's built-in defaults for that agent.
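
One way to picture that precedence is a key-by-key override, sketched below. This is an assumption about the lookup, not Riteway's source: `builtIns`, `projectConfig`, and `resolveAgent` are hypothetical names, and the sketch assumes built-in agents that are not redefined in the file remain available.

```javascript
// Two of the built-in defaults, copied from the generated file shown above.
const builtIns = {
  claude: { command: 'claude', args: ['-p', '--output-format', 'json', '--no-session-persistence'], outputFormat: 'json' },
  cursor: { command: 'agent', args: ['--print', '--output-format', 'json'], outputFormat: 'json' }
};

// Hypothetical contents of riteway.agent-config.json:
// a tweaked claude entry plus one custom agent.
const projectConfig = {
  claude: { command: 'claude', args: ['-p', '--output-format', 'json'], outputFormat: 'json' },
  'my-agent': { command: 'my-tool', args: ['--json'], outputFormat: 'json' }
};

// Hypothetical lookup: entries in the project file win over built-ins.
const resolveAgent = (name, registry = { ...builtIns, ...projectConfig }) => {
  const agent = registry[name];
  if (!agent) throw new Error(`Unknown agent: ${name}`);
  return agent;
};

console.log(resolveAgent('claude').args.length); // 3 (the project entry wins)
console.log(resolveAgent('cursor').command);     // agent
console.log(resolveAgent('my-agent').command);   // my-tool
```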

---

## Example Usage

```js
import { describe, Try } from 'riteway/index.js';

// a function to test
const sum = (...args) => {
  if (args.some(v => Number.isNaN(v))) throw new TypeError('NaN');
  return args.reduce((acc, n) => acc + n, 0);
};

describe('sum()', async assert => {
  const should = 'return the correct sum';

  assert({
    given: 'no arguments',
    should: 'return 0',
    actual: sum(),
    expected: 0
  });

  assert({
    given: 'zero',
    should,
    actual: sum(2, 0),
    expected: 2
  });

  assert({
    given: 'negative numbers',
    should,
    actual: sum(1, -4),
    expected: -3
  });

  assert({
    given: 'NaN',
    should: 'throw',
    actual: Try(sum, 1, NaN),
    expected: new TypeError('NaN')
  });
});
```

### Testing React Components

```js
import render from 'riteway/render-component';
import { describe } from 'riteway/index.js';

describe('renderComponent', async assert => {
  const $ = render(<div className="foo">testing</div>);

  assert({
    given: 'some jsx',
    should: 'render markup',
    actual: $('.foo').html().trim(),
    expected: 'testing'
  });
});
```

> Note: JSX component testing requires transpilation. See the [JSX Setup](#jsx-setup) section below for configuration with Vite or Next.js.

Riteway makes it easier than ever to test pure React components using the `riteway/render-component` module. A pure component is a component which, given the same inputs, always renders the same output.

I don't recommend unit testing stateful components, or components with side-effects. Write functional tests for those, instead, because you'll need tests which describe the complete end-to-end flow, from user input, to back-end-services, and back to the UI. Those tests frequently duplicate any testing effort you would spend unit-testing stateful UI behaviors. You'd need to do a lot of mocking to properly unit test those kinds of components anyway, and that mocking may cover up problems with too much coupling in your component. See ["Mocking is a Code Smell"](https://medium.com/javascript-scene/mocking-is-a-code-smell-944a70c90a6a) for details.

A great alternative is to encapsulate side-effects and state management in container components, and then pass state into pure components as props. Unit test the pure components and use functional tests to ensure that the complete UX flow works in real browsers from the user's perspective.

#### Isolating React Unit Tests

When you [unit test React components](https://medium.com/javascript-scene/unit-testing-react-components-aeda9a44aae2) you frequently have to render your components many times. Often, you want different props for some tests.

Riteway makes it easy to isolate your tests while keeping them readable by using [factory functions](https://link.medium.com/WxHPhCc3OV) in conjunction with [block scope](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Statements/block).

```js
import render from 'riteway/render-component';
import { describe } from 'riteway/index.js';
import ClickCounter from '../click-counter/click-counter-component';

describe('ClickCounter component', async assert => {
  // The `clicks` prop name is assumed here; use whatever prop your component expects.
  const createCounter = clickCount =>
    render(<ClickCounter clicks={ clickCount } />);

  {
    const count = 3;
    const $ = createCounter(count);

    assert({
      given: 'a click count',
      should: 'render the correct number of clicks.',
      actual: parseInt($('.clicks-count').html().trim(), 10),
      expected: count
    });
  }

  {
    const count = 5;
    const $ = createCounter(count);

    assert({
      given: 'a click count',
      should: 'render the correct number of clicks.',
      actual: parseInt($('.clicks-count').html().trim(), 10),
      expected: count
    });
  }
});
```

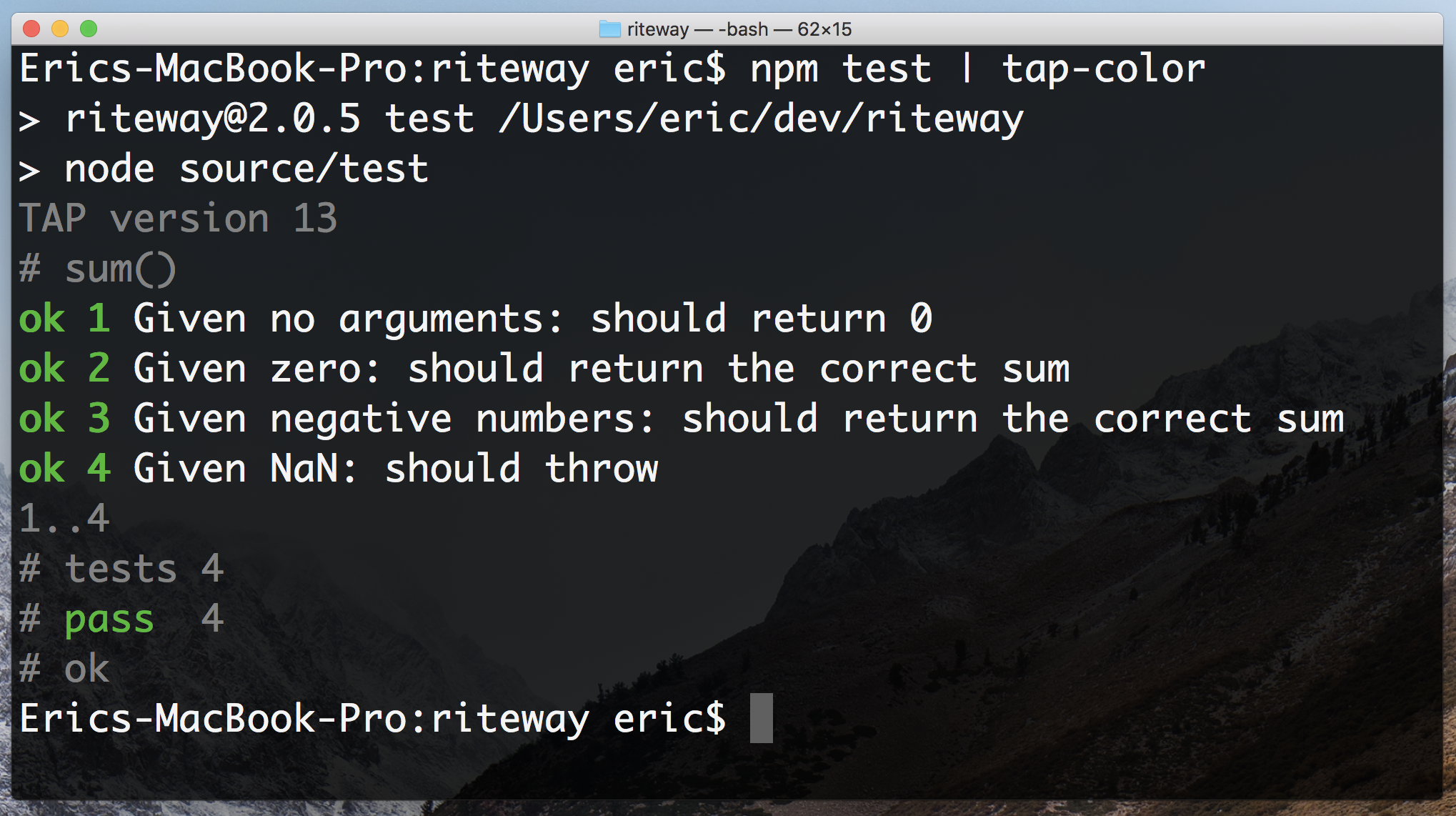

## Output

Riteway produces standard TAP output, so it's easy to integrate with just about any test formatter or reporting tool. (TAP is a well-established standard with hundreds, perhaps thousands, of integrations.)

```shell
TAP version 13
# sum()
ok 1 Given no arguments: should return 0
ok 2 Given zero: should return the correct sum
ok 3 Given negative numbers: should return the correct sum
ok 4 Given NaN: should throw

1..4
# tests 4
# pass 4

# ok
```

Prefer colorful output? No problem. The standard TAP output has you covered. You can run it through any TAP formatter you like:

```shell

npm install -g tap-color

npm test | tap-color

```

## API

### describe

```js

describe = (unit: String, cb: TestFunction) => Void

```

Describe takes a prose description of the unit under test (function, module, whatever), and a callback function (`cb: TestFunction`). The callback function should be an [async function](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Statements/async_function) so that the test can automatically complete when it reaches the end. Riteway assumes that all tests are asynchronous. Async functions automatically return a promise in JavaScript, so Riteway knows when to end each test.

### describe.only

```js

describe.only = (unit: String, cb: TestFunction) => Void

```

Like `describe`, but don't run any other tests in the test suite. See [test.only](https://github.com/substack/tape#testonlyname-cb).

### describe.skip

```js

describe.skip = (unit: String, cb: TestFunction) => Void

```

Skip running this test. See [test.skip](https://github.com/substack/tape#testskipname-cb)

### TestFunction

```js

TestFunction = assert => Promise

```

The `TestFunction` is a user-defined function which takes `assert()` and must return a promise. If you supply an async function, it will return a promise automatically. If you don't, you'll need to explicitly return a promise.

Failure to resolve the `TestFunction` promise will cause an error telling you that your test exited without ending. Usually, the fix is to add `async` to your `TestFunction` signature, e.g.:

```js

describe('sum()', async assert => {

/* test goes here */

});

```

### assert

```js
assert = ({
  given = Any,
  should = '',
  actual: Any,
  expected: Any
} = {}) => Void, throws
```

The `assert` function is the function you call to make your assertions. It takes prose descriptions for `given` and `should` (which should be strings), and invokes the test harness to evaluate the pass/fail status of the test. Unless you're using a custom test harness, assertion failures will cause a test failure report and an error exit status.

Note that `assert` uses [a deep equality check](https://github.com/substack/node-deep-equal) to compare the actual and expected values. Rarely, you may need another kind of check. In those cases, pass a JavaScript expression for the `actual` value.
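
For instance, an approximate numeric comparison can be reduced to a boolean expression. The sketch below only builds the object you would pass to `assert`; the scenario and the tolerance of `0.001` are assumptions chosen for illustration.

```javascript
// Hypothetical value under test: floating point math rarely lands on an exact value.
const total = 19.99 * 3;

// Instead of comparing floats directly, put the check itself in `actual`
// and expect the expression to evaluate to true.
const assertion = {
  given: 'three items priced at 19.99',
  should: 'total approximately 59.97',
  actual: Math.abs(total - 59.97) < 0.001,
  expected: true
};

console.log(assertion.actual === assertion.expected); // true
```

In a real test, you would pass the same object to `assert()` inside a `describe` callback.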

### createStream

```js

createStream = ({ objectMode: Boolean }) => NodeStream

```

Create a stream of output, bypassing the default output stream that writes messages to `console.log()`. By default the stream will be a text stream of TAP output, but you can get an object stream instead by setting `opts.objectMode` to `true`.

```js
import { describe, createStream } from 'riteway/index.js';

createStream({ objectMode: true }).on('data', function (row) {
  console.log(JSON.stringify(row));
});

describe('foo', async assert => {
  /* your tests here */
});
```

### countKeys

Given an object, return a count of the object's own properties.

```js

countKeys = (Object) => Number

```

This function can be handy when you're adding new state to an object keyed by ID, and you want to ensure that the correct number of keys were added to the object.
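
The sketch below shows that use case with a stand-in `countKeys` that mirrors the description above. Riteway provides its own `countKeys`; the version here, along with `addUser` and the sample data, is an assumption for illustration.

```javascript
// Stand-in with the behavior described above: count an object's own keys.
const countKeys = (obj = {}) => Object.keys(obj).length;

// Hypothetical reducer-style function that adds a user keyed by ID.
const addUser = (state, user) => ({ ...state, [user.id]: user });

const before = { u1: { id: 'u1', name: 'Ada' } };
const after = addUser(before, { id: 'u2', name: 'Grace' });

console.log(countKeys(before)); // 1
console.log(countKeys(after));  // 2
```

In a test, `countKeys(after)` would be the `actual` value and `2` the `expected` value.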

### Try

```js

Try = (fn, ...args) => Error | Any

```

Execute a function with the given arguments and return any error thrown, or the function's return value if no error occurs. This utility is designed for testing error cases in your assertions.

`Try` handles both synchronous errors (via try/catch) and asynchronous errors (via promise rejection), making it ideal for testing functions that throw exceptions or return rejected promises.

#### Example: Testing Synchronous Errors

```js
const sum = (...args) => {
  if (args.some(v => Number.isNaN(v))) throw new TypeError('NaN');
  return args.reduce((acc, n) => acc + n, 0);
};

describe('sum()', async assert => {
  assert({
    given: 'NaN',
    should: 'throw TypeError',
    actual: Try(sum, 1, NaN),
    expected: new TypeError('NaN')
  });
});
```

#### Example: Testing Asynchronous Errors

```js
const fetchUser = async (id) => {
  if (!id) throw new Error('ID required');
  return await fetch(`/api/users/${id}`);
};

describe('fetchUser()', async assert => {
  assert({
    given: 'no ID',
    should: 'throw an error',
    actual: await Try(fetchUser),
    expected: new Error('ID required')
  });
});
```
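
To show how a utility with this behavior could work, here is a minimal sketch that combines try/catch with promise rejection handling. `tryLike` is a hypothetical re-implementation for illustration only, not Riteway's source; use Riteway's own `Try` in real tests.

```javascript
// Hypothetical re-implementation for illustration.
const tryLike = (fn, ...args) => {
  try {
    const result = fn(...args);
    // If the function returned a promise, turn a rejection into a resolved error.
    return result instanceof Promise ? result.catch(error => error) : result;
  } catch (error) {
    // Synchronous throw: return the error instead of propagating it.
    return error;
  }
};

const failSync = () => { throw new TypeError('NaN'); };
const failAsync = async () => { throw new Error('ID required'); };

console.log(tryLike(failSync) instanceof TypeError); // true
console.log(tryLike(Math.max, 1, 2));                // 2

// Async failures arrive as a promise that resolves to the error.
tryLike(failAsync).then(error => console.log(error.message)); // ID required
```

Because the async case returns a promise, the assertion needs `await`, as in the asynchronous example above.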

## Render Component

First, import `render` from `riteway/render-component`:

```js

import render from 'riteway/render-component';

```

```js

render = (jsx) => CheerioObject

```

Take a JSX object and return a [Cheerio object](https://cheerio.js.org/), a partial implementation of the jQuery core API which makes selecting from your rendered JSX markup just like selecting with jQuery or the `querySelectorAll` API.

### Example

```js
describe('MyComponent', async assert => {
  const $ = render(<MyComponent />);

  assert({
    given: 'no params',
    should: 'render something with the my-component class',
    actual: $('.my-component').length,
    expected: 1
  });
});
```

## Match

First, import `match` from `riteway/match`:

```js

import match from 'riteway/match.js';

```

```js

match = text => pattern => String

```

Take some text to search and return a function which takes a pattern and returns the matched text, if found, or an empty string. The pattern can be a string or regular expression.

### Example

Imagine you have a React component you need to test. The component takes some text and renders it in some div contents. You need to make sure that the passed text is getting rendered.

```js
const MyComponent = ({text}) => <div className="contents">{text}</div>;
```

You can use `match` to create a new function that tests whether your search text contains anything matching the pattern you pass in. Writing tests this way gives you clear expected and actual values, so you can assert on the specific text you expect to find:

```js
describe('MyComponent', async assert => {
  const text = 'Test for whatever you like!';
  const $ = render(<MyComponent text={ text } />);
  const contains = match($('.contents').html());

  assert({
    given: 'some text to display',
    should: 'render the text.',
    actual: contains(text),
    expected: text
  });
});
```
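
The behavior described in this section (string or regular expression in, matched text or an empty string out) could be sketched roughly as follows. `matchLike` and `escapeRegExp` are hypothetical names for illustration; this is not Riteway's source.

```javascript
// Escape regex metacharacters so string patterns match literally.
const escapeRegExp = s => s.replace(/[.*+?^${}()|[\]\\]/g, '\\$&');

// Hypothetical re-implementation: curried so the text is supplied once
// and the returned function can be reused for several patterns.
const matchLike = text => pattern => {
  const re = typeof pattern === 'string'
    ? new RegExp(escapeRegExp(pattern))
    : pattern;
  const found = text.match(re);
  return found ? found[0] : '';
};

const contains = matchLike('<div class="contents">Hello, world!</div>');

console.log(contains('Hello'));   // Hello
console.log(contains(/w\w+/));    // world
console.log(contains('Goodbye')); // prints an empty line (no match)
```

Returning the matched text rather than a boolean is what keeps failure reports useful: a failed assertion shows the expected text next to an empty string.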

## JSX Setup

For JSX component testing, you need a build tool that can transpile JSX. We recommend **Vite** or **Next.js**, both of which handle JSX out of the box.

### Option 1: Vite Setup

[Vite](https://vitejs.dev/) provides excellent JSX support with minimal configuration:

1. **Install Vite, Vitest, and React plugin:**

```bash

npm install --save-dev vite vitest @vitejs/plugin-react

```

2. **Create `vite.config.js` in your project root:**

```javascript
import { defineConfig } from 'vite';
import react from '@vitejs/plugin-react';

export default defineConfig({
  plugins: [react()],
});
```

3. **Update your package.json test script:**

```json
{
  "scripts": {
    "test": "vitest run"
  }
}
```

Note: Vitest configuration is optional. The above setup will work with default settings.

### Option 2: Next.js Setup

[Next.js](https://nextjs.org/) handles JSX transpilation automatically. No additional configuration needed for JSX support.

## Vitest

[Vitest](https://vitest.dev/guide/) is a test runner built on [Vite](https://vitejs.dev/) that can run Riteway tests. It's a great way to get started with Riteway because it's easy to set up and fast. It can also run tests in real browsers through its browser mode, so you can test standard web components.

### Installing

First you will need to install Vitest. You will also need to install Riteway into your project if you have not already done so. You can use any package manager you like:

```shell

npm install --save-dev vitest

```

### Usage

First, import `assert` from `riteway/vitest`, and `describe` and `test` from `vitest`:

```ts

import { assert } from 'riteway/vitest';

import { describe, test } from "vitest";

```

Then you can use the Vitest runner to test. You can run `npx vitest` directly or add a script to your package.json. See [here](https://vitest.dev/config/) for additional details on setting up a Vitest configuration.

When using Vitest, wrap your assertions inside a `test` function so that Vitest can report where a failure occurred.

```ts
// a function to test
const sum = (...args) => {
  if (args.some(v => Number.isNaN(v))) throw new TypeError('NaN');
  return args.reduce((acc, n) => acc + n, 0);
};

describe('sum()', () => {
  test('basic summing', () => {
    assert({
      given: 'no arguments',
      should: 'return 0',
      actual: sum(),
      expected: 0
    });

    assert({
      given: 'two numbers',
      should: 'return the correct sum',
      actual: sum(2, 0),
      expected: 2
    });
  });
});
```

## Bun

[Bun](https://bun.sh/) has a fast, built-in test runner that is Jest-compatible. Riteway provides a Bun adapter so you can use the familiar `assert` API with Bun's test runner.

### Installing

First, make sure you have Bun installed. Then install Riteway into your project:

```shell

bun add --dev riteway

```

### Setup

Before using `assert`, you need to call `setupRitewayBun()` once to register the custom matcher. We recommend doing this in a global setup file using Bun's `preload` option.

Create a setup file (e.g., `test/setup.ts`):

```ts

import { setupRitewayBun } from 'riteway/bun';

setupRitewayBun();

```

Then configure Bun to preload it. Add to your `bunfig.toml`:

```toml

[test]

preload = ["./test/setup.ts"]

```

Or specify it via CLI:

```shell

bun test --preload ./test/setup.ts

```

### Usage

In your test files, import `test`, `describe`, and `assert` from `riteway/bun`:

```ts

import { test, describe, assert } from 'riteway/bun';

```

Then run your tests with `bun test`:

```shell

bun test

```

### Example

```ts
import { test, describe, assert } from 'riteway/bun';

// a function to test
const sum = (...args) => {
  if (args.some(v => Number.isNaN(v))) throw new TypeError('NaN');
  return args.reduce((acc, n) => acc + n, 0);
};

describe('sum()', () => {
  test('given: no arguments, should: return 0', () => {
    assert({
      given: 'no arguments',
      should: 'return 0',
      actual: sum(),
      expected: 0
    });
  });

  test('given: two numbers, should: return the correct sum', () => {
    assert({
      given: 'two numbers',
      should: 'return the correct sum',
      actual: sum(2, 3),
      expected: 5
    });
  });
});
```

### Failure Output

When a test fails, the error message includes the `given` and `should` context:

```

error: Given two different numbers: should be equal

Expected: 43

Received: 42

```