SuSiE z Estimate Prior Variance Methods Comparison

Yuxin Zou

2019-02-17

Last updated: 2019-02-17

workflowr checks:

✔ R Markdown file: up-to-date


✔ Environment: empty


✔ Seed: set.seed(20190115)

✔ Session information: recorded


✔ Repository version: 1dbbe5a

Status of the Git repository when the results were generated:

Ignored files:
    Ignored: .DS_Store
    Ignored: .Rhistory
    Ignored: .Rproj.user/
    Ignored: .sos/
    Ignored: data/.DS_Store
    Ignored: output/.DS_Store

Untracked files:
    Untracked: docs/figure/test.Rmd/
    Untracked: output/dsc_susie_z_v_output.rds
    Untracked: output/dscoutProblem475.rds
    Untracked: output/dscoutProblem75.rds

Past versions:

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 1dbbe5a | zouyuxin | 2019-02-17 | wflow_publish("analysis/SusieZPriorVarCompare.Rmd") |

library(dscrutils)

dscout = dscquery('susie_z_v', target='sim_gaussian.pve sim_gaussian.n_signal sim_gaussian.effect_weight susie_z_uniroot.L susie_z_em.L susie_z_optim.L susie_z_uniroot.optimV_method susie_z_em.optimV_method susie_z_optim.optimV_method score_susie.objective score_susie.converged score_susie.total score_susie.valid susie_z_uniroot.DSC_TIME susie_z_em.DSC_TIME susie_z_optim.DSC_TIME')

colnames(dscout) = c('DSC', 'pve', 'n_signal', 'effect_weight', 'L_uniroot', 'method_uniroot','Time_uniroot', 'L_em', 'method_em', 'Time_em', 'L_optim', 'method_optim', 'Time_optim', 'objective', 'converged', 'total', 'valid')

dscout$effect_weight[which(dscout$effect_weight == 'rep(1/n_signal, n_signal)')] = 'equal'

dscout$effect_weight[which(dscout$effect_weight != 'equal')] = 'notequal'

method = dscout$method_uniroot

method[dscout$method_em == 'EM'] = 'em'

method[dscout$method_optim == 'optim'] = 'optim'

L = dscout$L_uniroot

L[!is.na(dscout$L_em)] = dscout$L_em[!is.na(dscout$L_em)]

L[!is.na(dscout$L_optim)] = dscout$L_optim[!is.na(dscout$L_optim)]

Time = dscout$Time_uniroot

Time[!is.na(dscout$Time_em)] = dscout$Time_em[!is.na(dscout$Time_em)]

Time[!is.na(dscout$Time_optim)] = dscout$Time_optim[!is.na(dscout$Time_optim)]

dscout = cbind(dscout, method, L, Time)

dscout = dscout[, -c(5:13)]

library(dplyr)

Attaching package: 'dplyr'

The following objects are masked from 'package:stats':

    filter, lag

The following objects are masked from 'package:base':

    intersect, setdiff, setequal, union

library(knitr)

library(kableExtra)

dscout = readRDS('output/dsc_susie_z_v_output.rds')

We randomly generate X from N(0,1), n = 1200, p = 1000.

We randomly generate the response under different numbers of signals (1, 3, 5, 10), different values of pve (0.01, 0.2, 0.6, 0.8), and signals with either equal or unequal effect sizes. We fit the SuSiE model with L = 5 and L = 10, and compare three methods for estimating the prior variance: uniroot, em and optim. Each setting has 100 replicates, so there are 4 × 4 × 2 × 2 × 3 × 100 = 19200 fitted models in total.
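The model count follows from the full factorial design. As a minimal sketch, the grid described above can be enumerated in R; the column names here are illustrative and are not taken from the DSC configuration.

```r
# Enumerate the simulation design described in the text.
# Column names are illustrative, not the DSC module parameter names.
design <- expand.grid(
  n_signal      = c(1, 3, 5, 10),
  pve           = c(0.01, 0.2, 0.6, 0.8),
  effect_weight = c("equal", "notequal"),
  L             = c(5, 10),
  method        = c("uniroot", "em", "optim"),
  replicate     = 1:100,
  stringsAsFactors = FALSE
)

# Total number of fitted models: 4 * 4 * 2 * 2 * 3 * 100
n_models <- nrow(design)

# Number of fits for each (effect_weight, method) pair
n_per_cell <- n_models / (2 * 3)

print(n_models)    # 19200
print(n_per_cell)  # 3200
```

The per-pair count of 3200 is the denominator used when the proportions are computed for each effect-size setting.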

All SuSiE models converge.

sum(dscout$converged)

[1] 19200

Are the fitted objectives of EM and optim higher than that of uniroot?

uniroot.obj = dscout$objective[dscout$method == 'uniroot']

em.obj = dscout$objective[dscout$method == 'em']

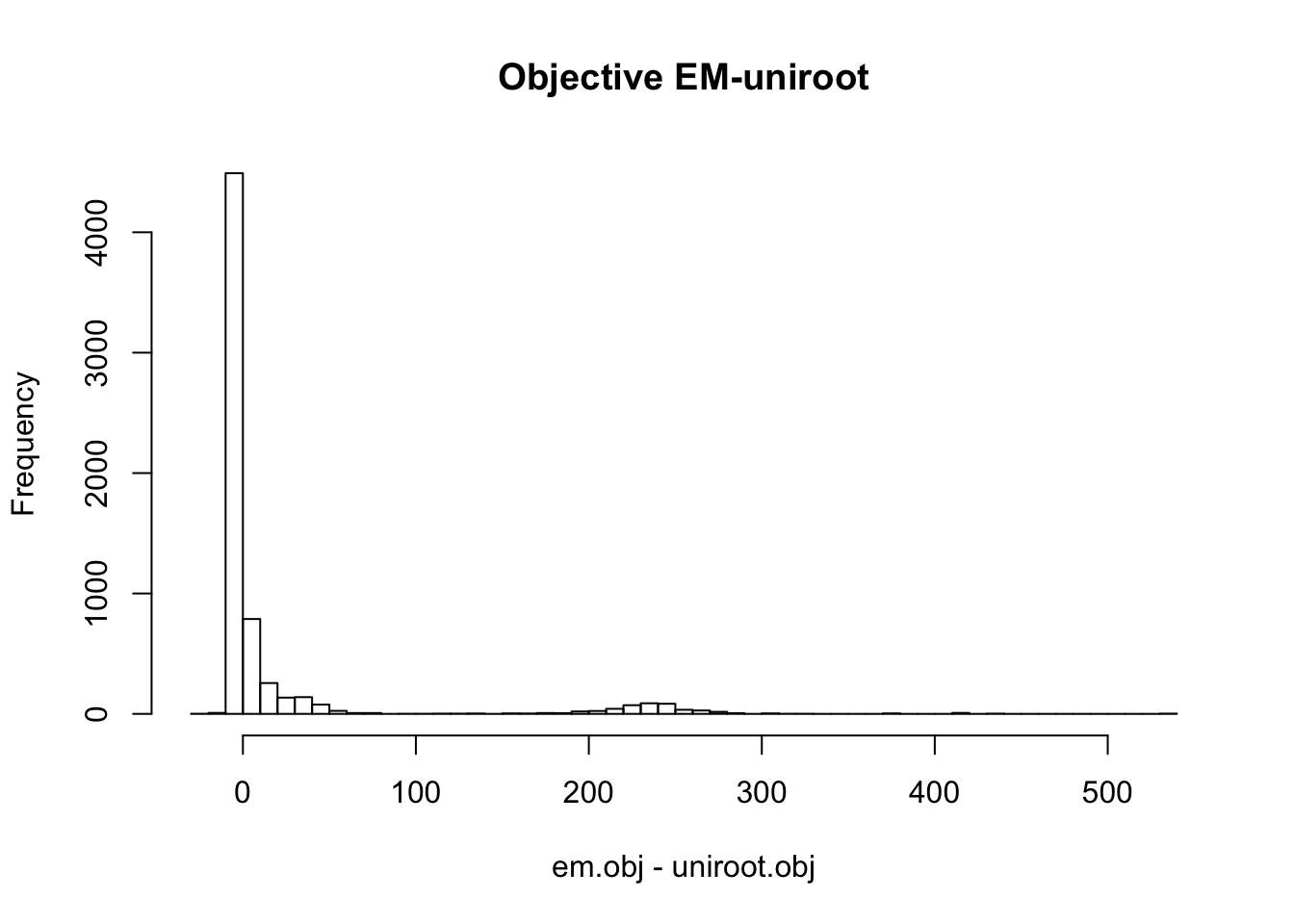

optim.obj = dscout$objective[dscout$method == 'optim']hist(em.obj - uniroot.obj, main='Objective EM-uniroot', breaks=50)

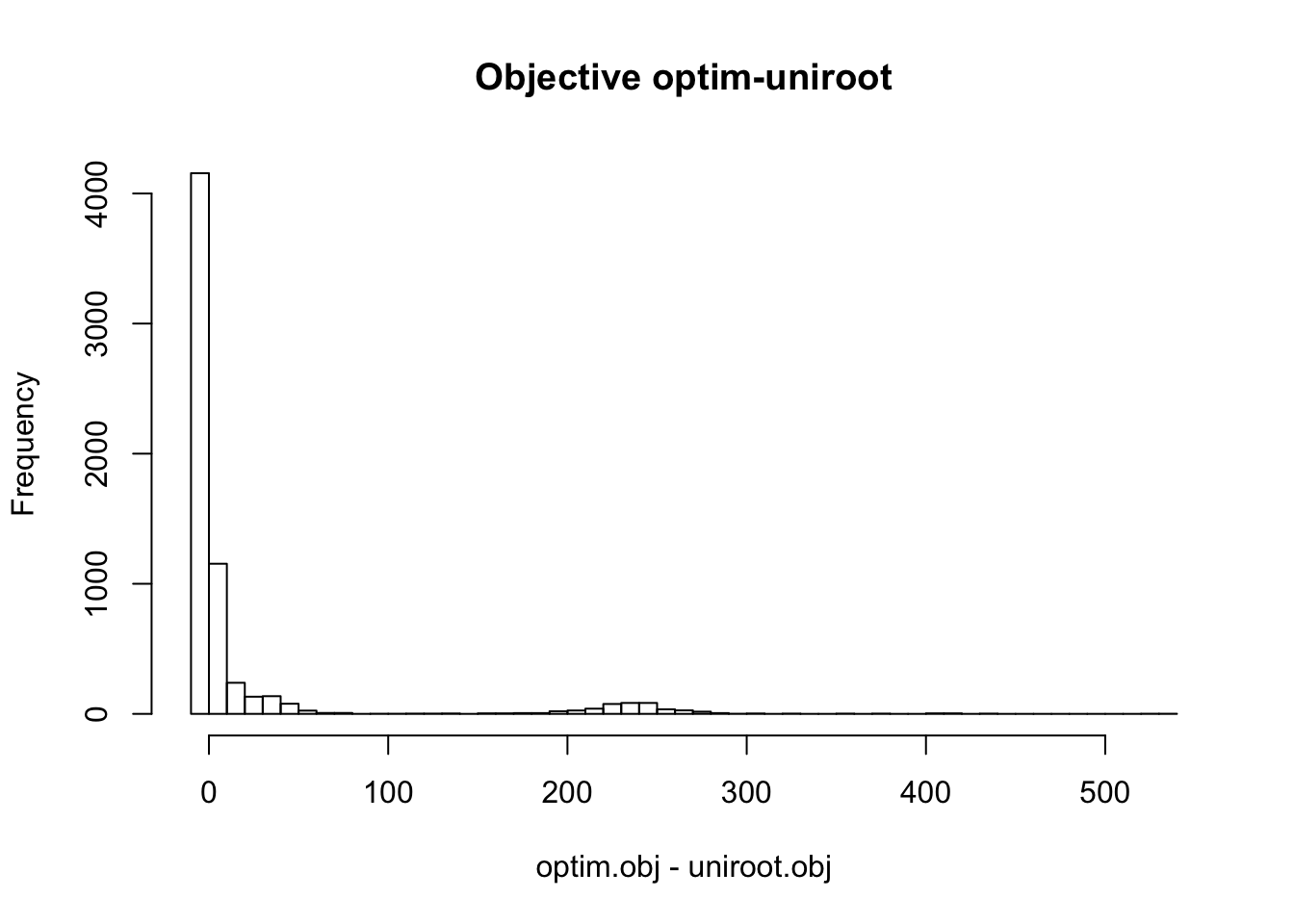

hist(optim.obj - uniroot.obj, main='Objective optim-uniroot', breaks=50)

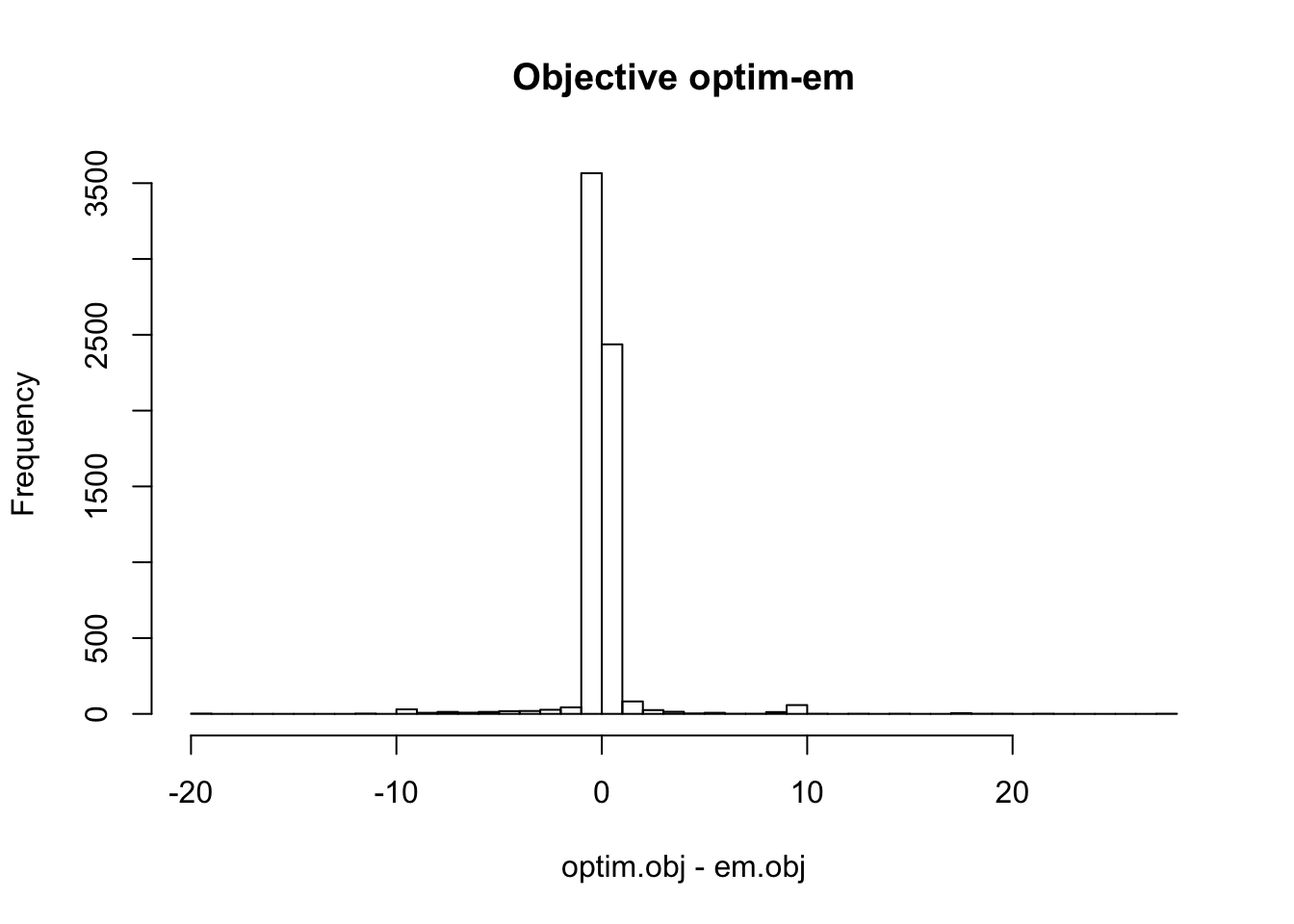

hist(optim.obj - em.obj, main='Objective optim-em', breaks=50)

The objectives from the three methods are similar in most cases. In some cases, however, EM and optim attain a much higher objective than uniroot, and the difference between the uniroot objective and the optim (or em) objective can exceed 200.
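The histograms can be complemented by a numeric summary of the paired differences. The sketch below is one possible way to do this; the helper `summarise_diff` and the tolerance `tol` are hypothetical and not part of the original analysis, and the toy objectives are made up for illustration.

```r
# Summarise paired objective differences a - b.
# `summarise_diff` and `tol` are hypothetical; `big = 200` mirrors the
# gap size mentioned in the text.
summarise_diff <- function(a, b, tol = 1e-3, big = 200) {
  stopifnot(length(a) == length(b))
  d <- a - b
  c(
    similar = mean(abs(d) <= tol),  # proportion essentially tied
    higher  = mean(d > tol),        # proportion where a is clearly higher
    lower   = mean(d < -tol),       # proportion where a is clearly lower
    large   = mean(abs(d) > big)    # proportion with a gap above `big`
  )
}

# Toy objectives (made up; not results from this study).
uniroot_obj <- c(-1700.0, -1650.0, -1800.0, -1725.5)
em_obj      <- c(-1700.0, -1649.0, -1550.0, -1725.5)

res <- summarise_diff(em_obj, uniroot_obj)
print(res)
```

With the real data, the call would be `summarise_diff(em.obj, uniroot.obj)`, assuming the two vectors are aligned row by row.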

em_uni = optim_uni = optim_em = matrix(NA,1,2)

# The column labels assigned below assume weight[1] is 'equal'
weight = unique(dscout$effect_weight)

for(j in 1:2){

  tmp = dscout %>% filter(effect_weight == weight[j])

  # Objectives are compared element-wise, so the rows for each method
  # must be in the same order

  uniroot.obj = tmp$objective[tmp$method == 'uniroot']

  em.obj = tmp$objective[tmp$method == 'em']

  optim.obj = tmp$objective[tmp$method == 'optim']

  # 3200 = number of fits per (effect_weight, method) pair

  em_uni[1,j] = sum(em.obj > uniroot.obj)/3200

  optim_uni[1,j] = sum(optim.obj > uniroot.obj)/3200

  optim_em[1,j] = sum(optim.obj > em.obj)/3200

}

colnames(em_uni) = colnames(optim_uni) = colnames(optim_em) = paste0('equal_', c('T', 'F'))

Across the simulation scenarios, the relative performance of the methods depends only on whether the effect sizes are equal.

The proportion of times the objective of em is higher than that of uniroot:

em_uni %>% kable() %>% kable_styling()

| equal_T | equal_F |
|---|---|
| 0.37625 | 0.483125 |

The proportion of times the objective of optim is higher than that of uniroot:

optim_uni %>% kable() %>% kable_styling()

| equal_T | equal_F |
|---|---|
| 0.564375 | 0.7584375 |

The proportion of times the objective of optim is higher than that of em:

optim_em %>% kable() %>% kable_styling()

| equal_T | equal_F |
|---|---|
| 0.6896875 | 0.7371875 |
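The proportions above come from element-wise comparisons, which are valid only if the rows for each method appear in the same order. A more defensive variant, sketched here in base R, matches the fits explicitly before comparing them. The helper `prop_higher`, the key columns and the toy data are assumptions for illustration; with the real `dscout`, the keys would presumably need to include every scenario column (for example `DSC`, `pve`, `n_signal`, `effect_weight` and `L`).

```r
# Toy results (made up): one row per (DSC replicate, scenario, method).
toy <- data.frame(
  DSC           = rep(1:2, each = 6),
  effect_weight = rep(rep(c("equal", "notequal"), each = 3), times = 2),
  method        = rep(c("uniroot", "em", "optim"), times = 4),
  objective     = c(-10, -10,  -9,  -20, -18, -17,
                    -11, -12, -11,  -21, -21, -19),
  stringsAsFactors = FALSE
)

# Columns that identify one simulated data set (assumed for this sketch).
keys <- c("DSC", "effect_weight")

# Proportion of matched fits where method `a` has a strictly higher
# objective than method `b`, reported per effect_weight level.
prop_higher <- function(dat, a, b) {
  da <- dat[dat$method == a, c(keys, "objective")]
  db <- dat[dat$method == b, c(keys, "objective")]
  m  <- merge(da, db, by = keys, suffixes = c(".a", ".b"))
  tapply(m$objective.a > m$objective.b, m$effect_weight, mean)
}

em_uni_toy    <- prop_higher(toy, "em", "uniroot")
optim_uni_toy <- prop_higher(toy, "optim", "uniroot")
optim_em_toy  <- prop_higher(toy, "optim", "em")

print(rbind(em_uni = em_uni_toy, optim_uni = optim_uni_toy, optim_em = optim_em_toy))
```

Because the rows are matched with `merge`, the result does not depend on row order, and taking the mean of the logical comparison removes the need for a hard-coded denominator.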

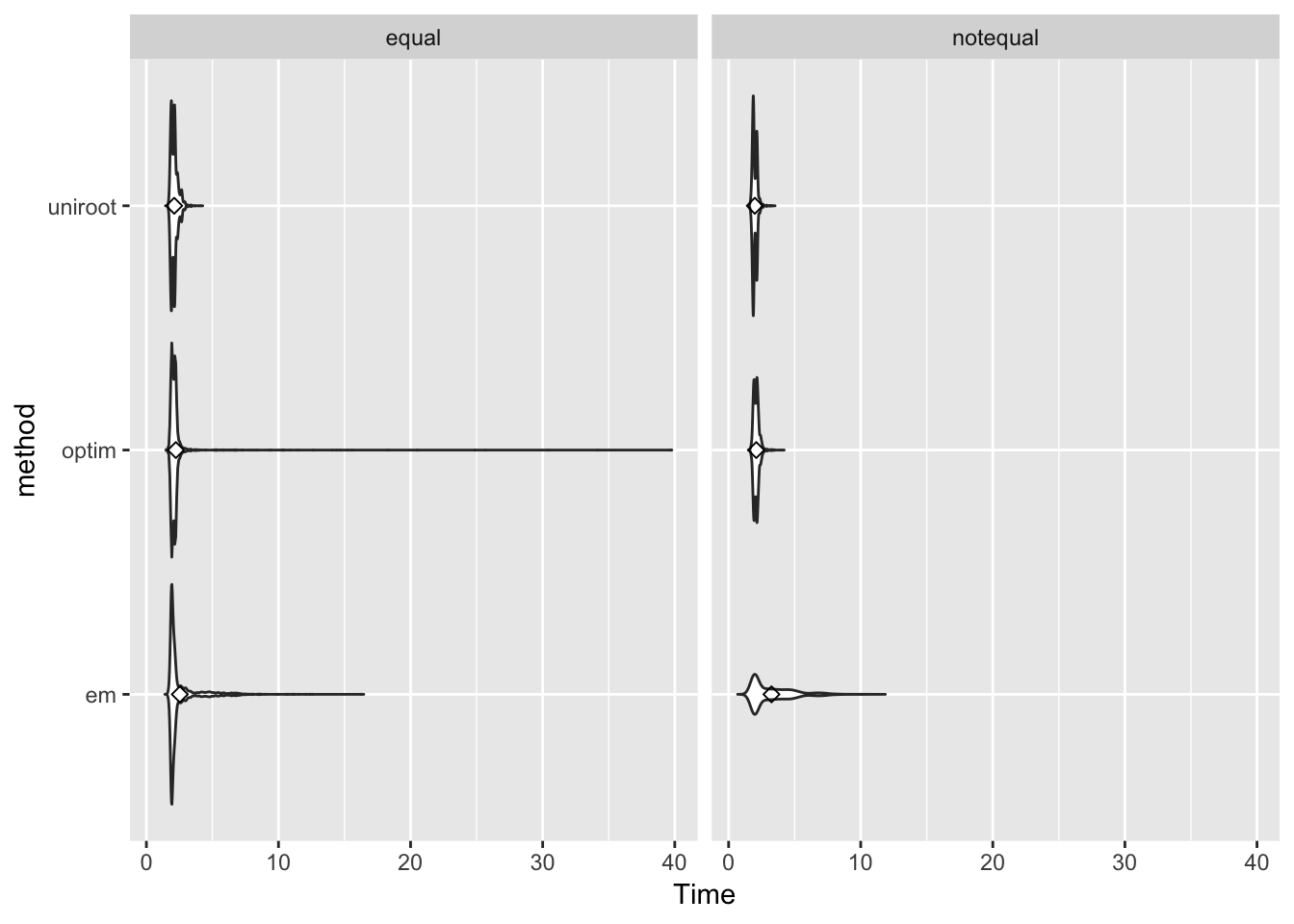

Computing speed

library(ggplot2)

p <- ggplot(dscout, aes(x=method, y=Time)) + facet_wrap(~effect_weight)+ geom_violin(trim = FALSE) + coord_flip() + stat_summary(fun.y=mean, geom="point", shape=23, size=2)

p
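A numeric table of mean run times can accompany the violin plot. The sketch below uses base R `aggregate` on made-up timings; the data frame `toy_time` is hypothetical and the numbers are not measurements from this study.

```r
# Toy timings in seconds (made up; not measurements from this study).
toy_time <- data.frame(
  method        = rep(c("uniroot", "em", "optim"), each = 4),
  effect_weight = rep(c("equal", "equal", "notequal", "notequal"), times = 3),
  Time          = c(1.0, 3.0, 2.0, 4.0,
                    0.5, 1.5, 1.0, 2.0,
                    2.0, 2.0, 3.0, 5.0),
  stringsAsFactors = FALSE
)

# Mean run time per (method, effect_weight) cell, matching the mean
# marker drawn by stat_summary in the plot.
time_summary <- aggregate(Time ~ method + effect_weight,
                          data = toy_time, FUN = mean)
print(time_summary)
```

With the real data, the same call on `dscout` would give the values marked by the diamond points. Note that the `fun.y` argument of `stat_summary` matches the ggplot2 3.1.0 version recorded in the session information; from ggplot2 3.3.0 onward it is deprecated in favour of `fun`.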

Session information

sessionInfo()

R version 3.5.1 (2018-07-02)

Platform: x86_64-apple-darwin15.6.0 (64-bit)

Running under: macOS 10.14.3

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] ggplot2_3.1.0 bindrcpp_0.2.2 kableExtra_1.0.1 knitr_1.20

[5] dplyr_0.7.8

loaded via a namespace (and not attached):

[1] Rcpp_1.0.0 plyr_1.8.4 highr_0.7

[4] compiler_3.5.1 pillar_1.3.1 git2r_0.24.0

[7] workflowr_1.1.1 bindr_0.1.1 R.methodsS3_1.7.1

[10] R.utils_2.7.0 tools_3.5.1 digest_0.6.18

[13] gtable_0.2.0 evaluate_0.12 tibble_2.0.1

[16] viridisLite_0.3.0 pkgconfig_2.0.2 rlang_0.3.1

[19] rstudioapi_0.9.0 yaml_2.2.0 withr_2.1.2

[22] stringr_1.3.1 httr_1.4.0 xml2_1.2.0

[25] hms_0.4.2 grid_3.5.1 webshot_0.5.1

[28] rprojroot_1.3-2 tidyselect_0.2.5 glue_1.3.0

[31] R6_2.3.0 rmarkdown_1.11 purrr_0.2.5

[34] readr_1.3.1 magrittr_1.5 whisker_0.3-2

[37] backports_1.1.3 scales_1.0.0 htmltools_0.3.6

[40] assertthat_0.2.0 rvest_0.3.2 colorspace_1.4-0

[43] labeling_0.3 stringi_1.2.4 lazyeval_0.2.1

[46] munsell_0.5.0 crayon_1.3.4 R.oo_1.22.0

This reproducible R Markdown analysis was created with workflowr 1.1.1